Zimage Turbo Beats FLUX 2: Local AI Image Generation

Meet Tongyi/Alibaba’s Zimage Turbo: stunning local AI image results with sharp anatomy. See examples and get the ComfyUI workflow that outshines FLUX 2.

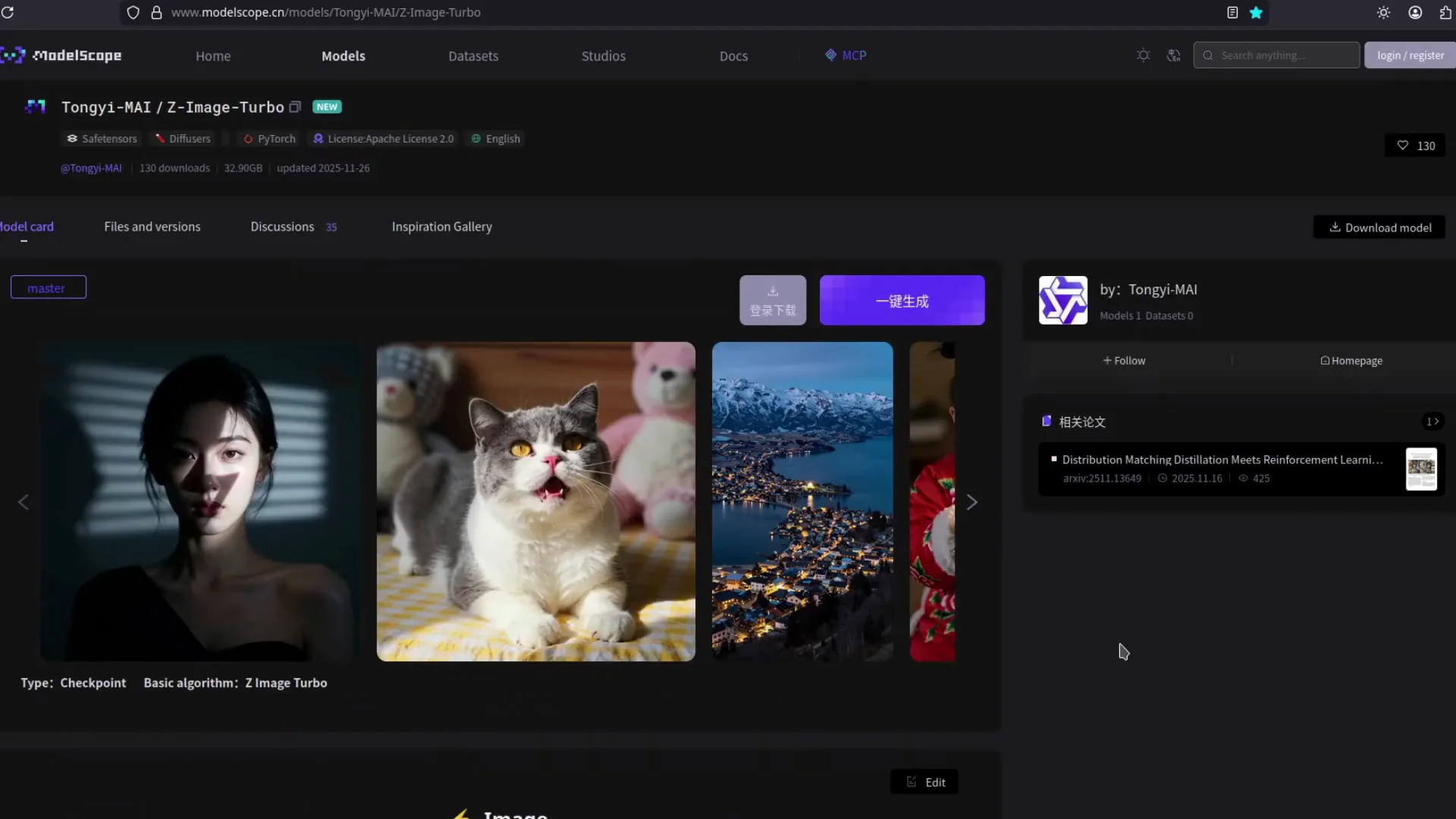

A 6-billion-parameter image generation model designed for efficient few-step sampling, strong image quality, and bilingual English–Chinese text rendering on practical hardware.

Z-Image Turbo is part of the Z-Image project, which focuses on efficient large-scale image generation. The core idea is simple: it is possible to build a 6-billion-parameter foundation model for images that delivers strong performance without relying on very large model sizes or very long sampling schedules. Z-Image Turbo is the distilled variant for fast text-to-image generation.

The model uses a single-stream diffusion transformer architecture where text tokens, semantic tokens, and image tokens share one transformer. This approach keeps the design compact and makes good use of parameters. Through systematic optimization and distillation, Z-Image Turbo runs in a small number of steps while keeping stable image quality.

One of the main design goals is practical deployment. Z-Image Turbo runs on consumer graphics cards with less than 16 GB of VRAM and still produces images that are competitive with much larger systems. This allows more people and smaller teams to experiment with high-quality image generation without a large infrastructure budget.

| Component | Description |

|---|---|

| Z-Image (Base) | The 6B-parameter foundation model for image generation. It shows that strong image quality can be achieved without very large parameter counts by focusing on careful data curation, training design, and a single-stream transformer over text and image tokens. |

| Z-Image Turbo | A distilled model for fast text-to-image generation. It keeps the main strengths of the base model and reduces the number of sampling steps to around eight diffusion updates while maintaining strong photorealistic quality and bilingual text rendering. |

| Z-Image Edit | A continued-training variant aimed at image editing. It accepts an input image and structured instructions, then produces edited results that stay consistent with the original content while following the requested changes. |

| Decoupled-DMD | The distillation approach behind Z-Image Turbo. It separates classifier-free guidance augmentation from distribution matching, which makes it easier to tune stability and few-step performance. |

| DMDR | A training method that combines distribution matching with reinforcement learning style feedback to improve semantic alignment, structure, and fine detail in a few-step model. |

Many diffusion-based image generators rely on long sampling schedules, which push latency and hardware demands up. Z-Image Turbo explores a different direction. It keeps the number of function evaluations small while aiming for stable, predictable quality. This is possible due to careful distillation from the base Z-Image model using Decoupled-DMD.

The training process separates the effect of classifier-free guidance from the rest of the distillation objective. In practice, this means that the mechanism that strengthens the connection between prompts and images can be tuned independently from the part that matches the teacher distribution. The result is a model that converges to a stable few-step sampler more directly.

A further layer, DMDR, adds feedback signals that resemble reinforcement learning. Scores based on human preference, structure, or aesthetics can guide training while distribution matching keeps the updates regularized. This balance helps Z-Image Turbo produce images with sharper details and better semantic alignment without increasing the number of sampling steps.

Because the full pipeline is focused on both speed and quality, Z-Image Turbo is well aligned with real deployment needs. It suits interactive tools, creative applications, and experimentation on standard GPUs, where short latency and controlled memory use matter as much as raw image scores.

Z-Image Turbo uses a single transformer that takes text tokens, high-level semantic tokens, and image tokens in one sequence. This simple design improves parameter usage because the same network reasons about language and image content together. It also keeps the code path more compact, which is helpful for optimization and deployment.

The distilled model operates in roughly eight diffusion updates while still aiming for stable image quality. For users, this means short wait times between entering a prompt and seeing results. In many cases the experience feels close to real-time on suitable hardware, even at higher resolutions such as 1024 by 1024 pixels.

Z-Image Turbo is trained to render both English and Chinese text into images with consistent structure. This includes dense prompts with mixed language content. The model is able to place and draw characters in a way that respects the layout in the prompt, which is useful for posters, titles, diagrams, and other image types that combine graphics with written content.

With around six billion parameters and an efficient diffusion schedule, Z-Image Turbo fits on graphics cards with less than 16 GB of VRAM by using mixed precision and memory optimization features in the diffusion library. This gives hobbyists, researchers, and smaller organizations a chance to run a strong model on hardware they already own.

The project provides model weights and code under the Apache 2.0 license. Users can study how the training and sampling pipelines are assembled, adapt the model to new datasets, or integrate Z-Image Turbo into their own tools and services while keeping full control over their infrastructure.

You can experiment with prompt design, text rendering, and composition directly in the interactive demo below. The interface is hosted on a Hugging Face Space and connects to the Z-Image Turbo pipeline. Enter a prompt, adjust controls, and observe how the model responds to different descriptions.

The demo may take a short time to start if the underlying space is waking up. Once it is ready, you can test short prompts, bilingual text prompts, and more structured instructions to see how Z-Image Turbo behaves across different situations.

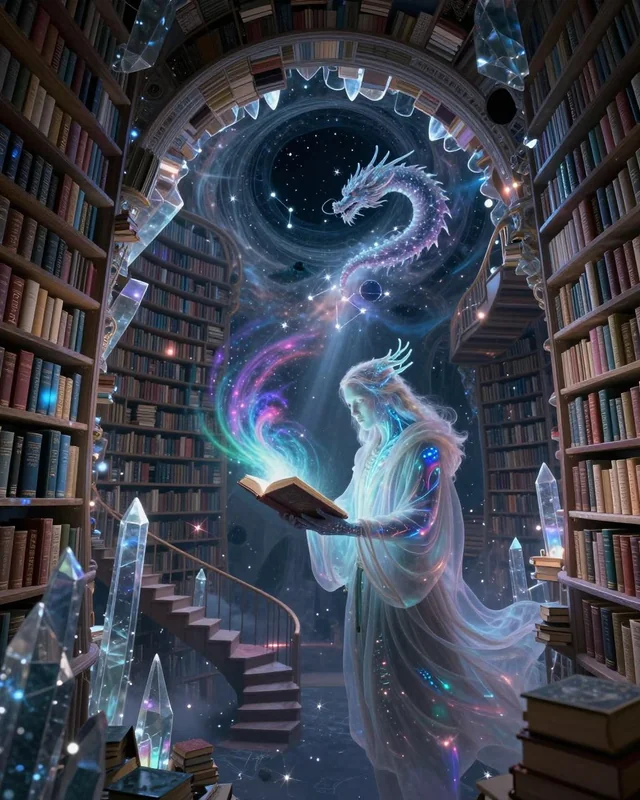

Explore stunning examples generated by Z-Image Turbo. These images showcase the model's capabilities in photorealism, artistic composition, and detail rendering across various subjects and styles.

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

Generated with Z-Image Turbo

8 NFEs • High Quality Output

All images were generated using Z-Image Turbo with minimal prompt engineering.

Experience the power of efficient, high-quality AI image generation on consumer hardware.

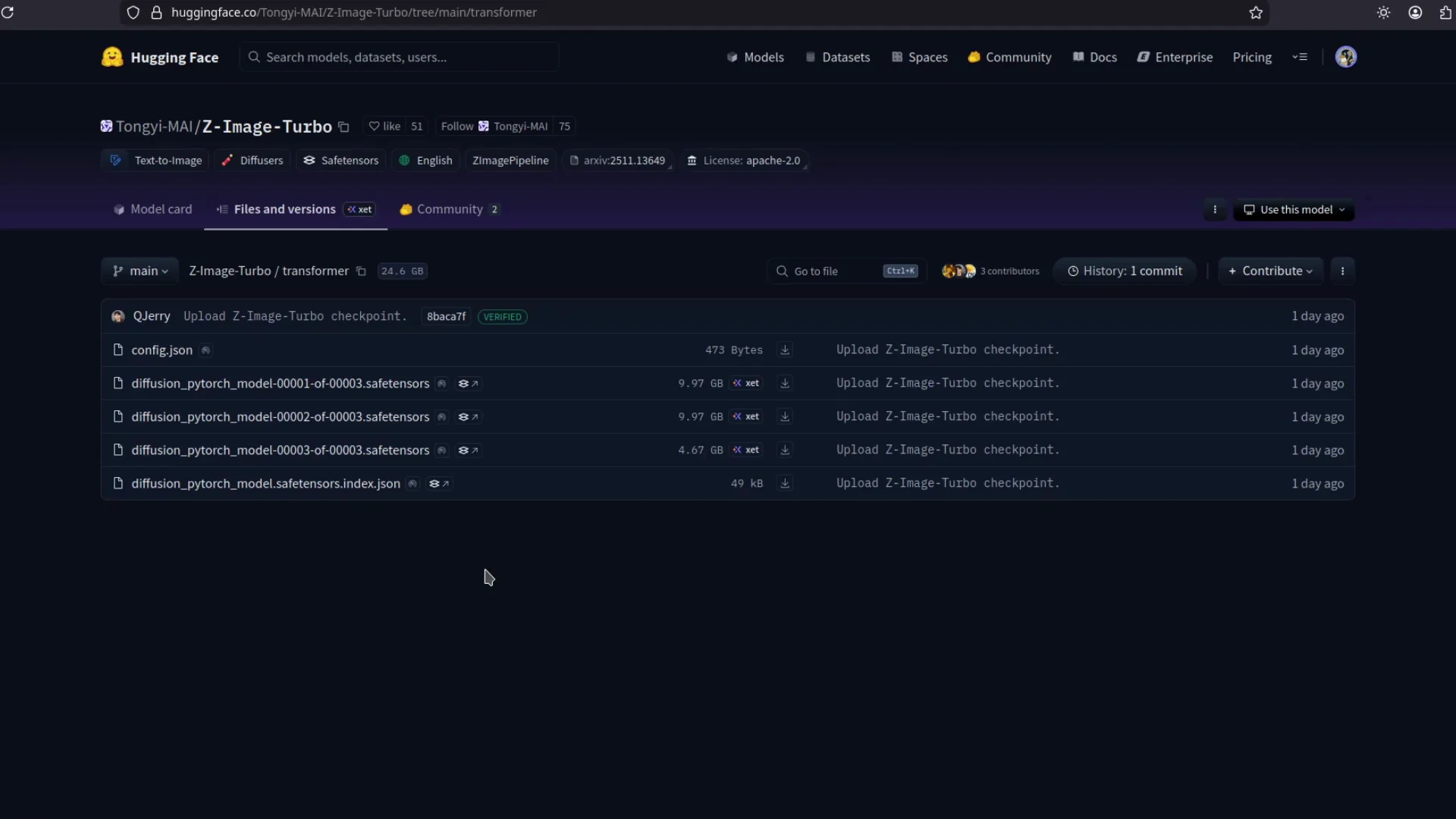

Installing Z-Image Turbo locally is based on the standard diffusion library workflow. The main steps are installing the library, preparing a recent PyTorch version with GPU support, and loading the Z-Image Turbo pipeline by name. Once that is done, a short script is enough to generate images from text prompts.

For a more detailed installation guide, you can refer to the dedicated Installation page in this site. It walks through environment preparation, common flags for memory-constrained devices, and sample scripts for both simple command-line usage and integration into larger applications or services.

# Install the latest diffusers from source for Z-Image support

pip install git+https://github.com/huggingface/diffusers

# Typical environment setup

pip install torch --index-url https://download.pytorch.org/whl/cu124

pip install transformers accelerate safetensorsAfter the environment is ready, you can load the pipeline using the dedicated ZImagePipeline class provided by the diffusion library. The example below shows a minimal script that generates a single image with fixed seed and eight diffusion iterations.

import torch

from diffusers import ZImagePipeline

pipe = ZImagePipeline.from_pretrained(

"Tongyi-MAI/Z-Image-Turbo",

torch_dtype=torch.bfloat16,

low_cpu_mem_usage=False,

)

pipe.to("cuda")

prompt = "Young woman in red traditional clothing, clear facial details, soft evening lighting, city background, bilingual signboard text."

image = pipe(

prompt=prompt,

height=1024,

width=1024,

num_inference_steps=9, # about 8 DiT forwards

guidance_scale=0.0,

generator=torch.Generator("cuda").manual_seed(42),

).images[0]

image.save("z_image_turbo_example.png")The same pattern can be adapted to batch prompts, prompt templates, or simple web services. The Installation page gives further details on optional features such as attention backends, model compilation, and CPU offloading.

Create applications where users type descriptions and receive images in a short time. The few-step nature of Z-Image Turbo supports interactive workflows such as sketching ideas, refining compositions, or exploring different variations for a concept.

Use bilingual text rendering to design posters, covers, and layouts that combine English and Chinese content. Z-Image Turbo follows structured prompts that describe placement, type, and content for the text areas.

Study the behavior of Decoupled-DMD and DMDR on a real model. Z-Image Turbo offers a practical reference for how distillation and preference-based objectives can be combined to form strong few-step generators.

Even though this homepage is focused on Z-Image Turbo, the project also includes an editing variant. Together, they cover both prompt-based generation and modification of existing content, making it easier to design full creative workflows.

Meet Tongyi/Alibaba’s Zimage Turbo: stunning local AI image results with sharp anatomy. See examples and get the ComfyUI workflow that outshines FLUX 2.

Learn how to run Z-image Turbo on low‑VRAM GPUs and generate high‑quality images in just 8 steps. Simple workflow, key settings, and tips for speed and quality.

Learn how to create stunning comic pages and manga-style art with Z-Image Turbo. This guide includes full prompts, hardware requirements, and step-by-step instructions for generating multi-panel comic layouts with AI.

Z-Image-De-Turbo: A de-distilled variant of Z-Image-Turbo for flexible training, LoRA development, and extended experimentation without adapters.